WHIM

A unified desktop and mobile ecosystem for voice AI, local LLM chat, weather radar, APRS radio, geofencing, IoT control, and vehicle integration. No cloud dependencies. No subscriptions. Your hardware, your models, your rules.

Whim is a Python desktop application (Tkinter) with 18 module tabs organized across two rows. It connects to local LLMs through Ollama (DeepSeek R1:32B, Llama 3.1:8B), drives a voice pipeline with XTTS v2 text-to-speech and wake word detection, controls Samsung SmartThings devices, integrates Signal and Discord messaging, runs a weather radar with NEXRAD overlay, monitors APRS amateur radio stations, tracks livestock via geofencing, and manages Android devices through ADB.

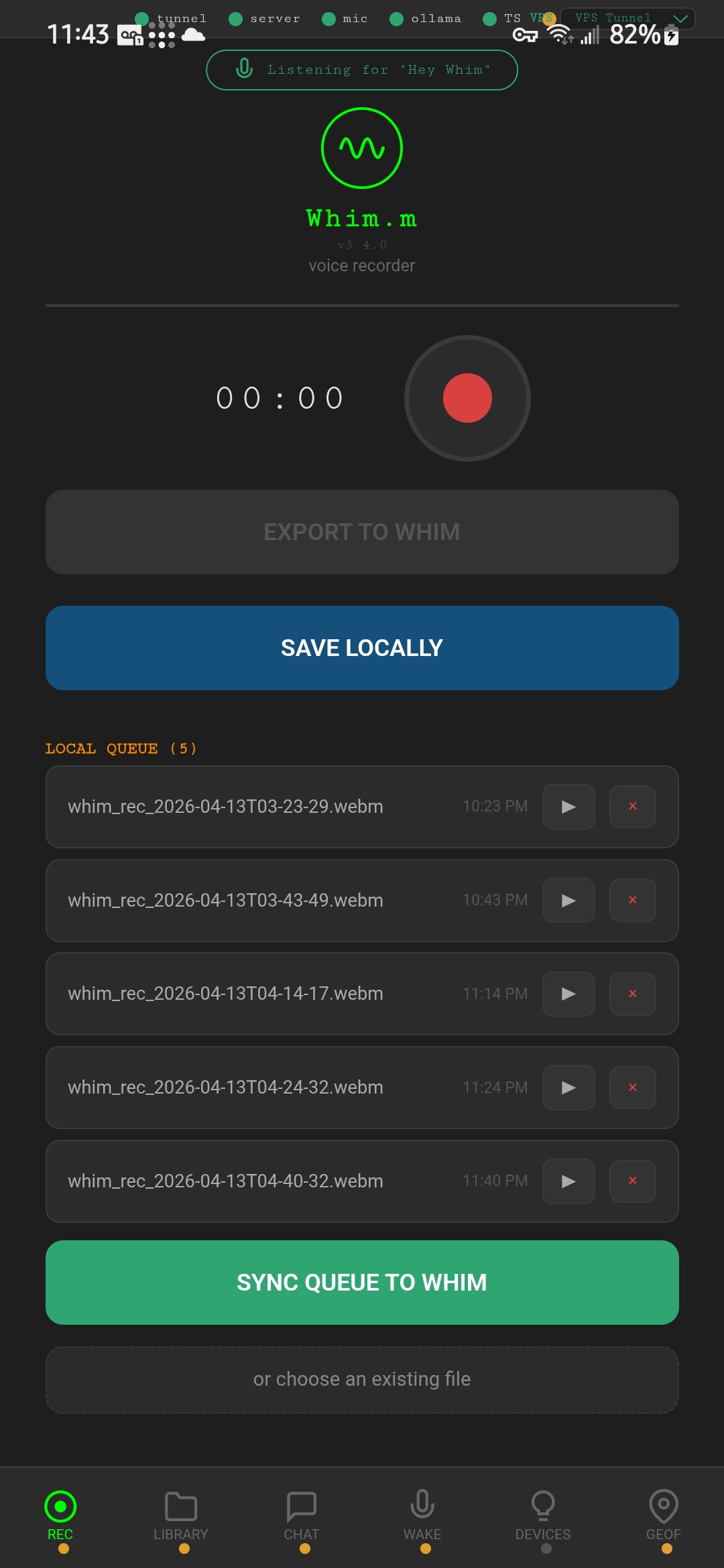

A companion mobile app (Whim.m) runs on Android phones and tablets, connecting over a reverse SSH tunnel through a VPS or directly via Tailscale mesh VPN. A vehicle dashboard interface (Whim.v) runs on a 13.6" Android head unit with its own local model for offline autonomy.

All AI inference is local. All data stays on your network. The system works in a tunnel, in a field, in a garage -- anywhere your hardware lives.

Every tab is a standalone module. Pick the ones you need, disable the rest. Build your own stack.

Full AI chat with local Ollama models. System presets, observability panel, context metering, tool trace, output templates, and token usage tracking. Switch between DeepSeek R1:32B (reasoning), Llama 3.1:8B (fast chat), and Qwen (tool use) from the header dropdown.
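
As a rough illustration of what a chat turn against a local Ollama server can look like, the sketch below posts to Ollama's `/api/chat` endpoint on the default port 11434. The helper names (`build_chat_request`, `chat`) and the model tags are assumptions for illustration, not Whim's actual code.

```python
import json
import urllib.request

# Default Ollama endpoint; adjust the host if Ollama runs on another machine.
OLLAMA_URL = "http://localhost:11434/api/chat"


def build_chat_request(model, user_text, system_prompt=None):
    """Build the JSON body for a single non-streaming chat turn."""
    messages = []
    if system_prompt:
        # A system preset becomes the first message in the conversation.
        messages.append({"role": "system", "content": system_prompt})
    messages.append({"role": "user", "content": user_text})
    return {"model": model, "messages": messages, "stream": False}


def chat(model, user_text, system_prompt=None, timeout=120):
    """Send one chat turn to the local Ollama server and return the reply text."""
    body = json.dumps(build_chat_request(model, user_text, system_prompt))
    request = urllib.request.Request(
        OLLAMA_URL,
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        reply = json.loads(response.read())
    return reply["message"]["content"]
```

Switching models then amounts to passing a different tag, for example `chat("llama3.1:8b", "Summarize today's alerts")` for fast chat or `chat("deepseek-r1:32b", ...)` for reasoning, assuming those tags have been pulled locally.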

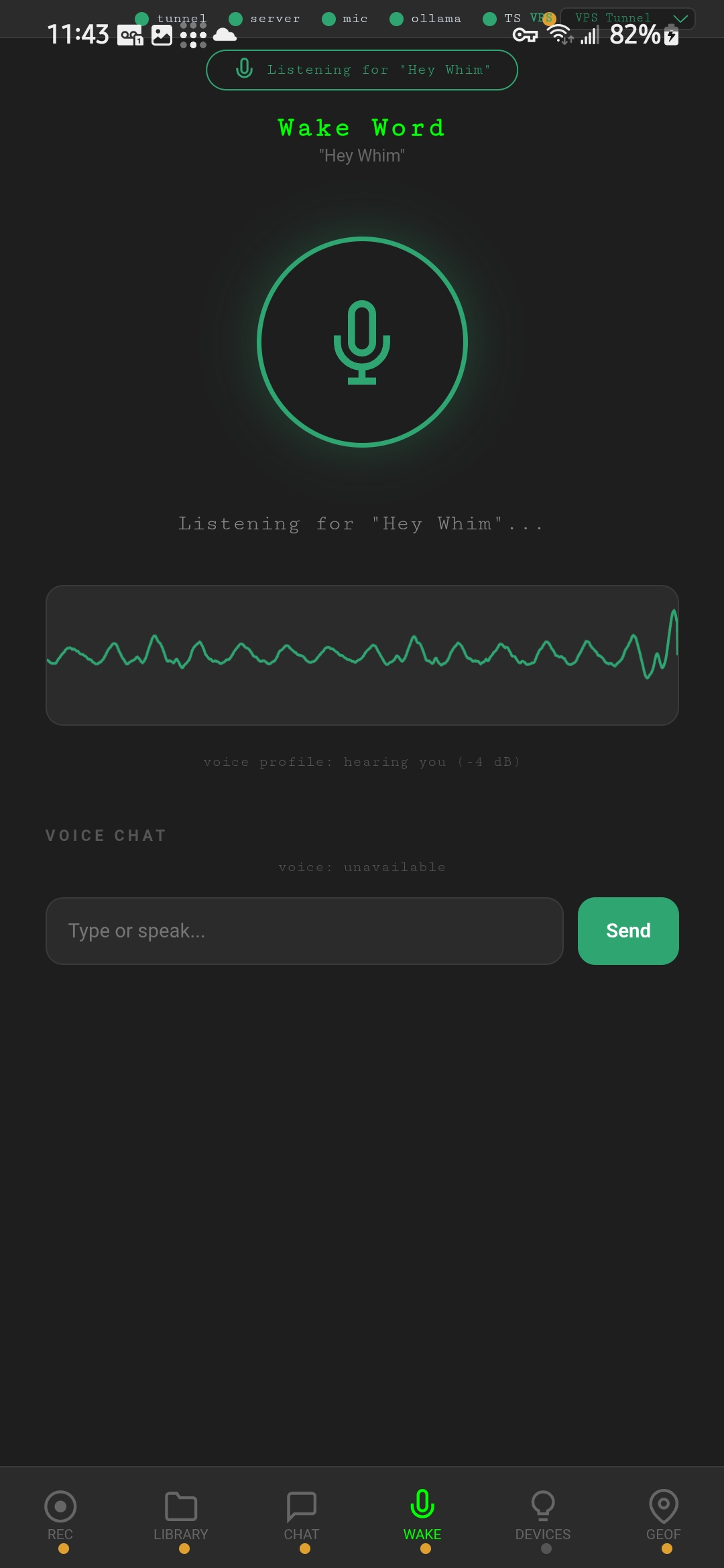

Wake word detection (openWakeWord / Porcupine), live spectrogram (Whim-Scope), dynamic gain, high-pass filter, AGC, parametric EQ, spectral subtraction, VAD, and a confidence ghost bar. Tuned for noisy vehicle and outdoor environments.
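
To make one stage of that chain concrete, here is a minimal first-order high-pass filter of the kind often used to strip road and wind rumble before wake word detection. The cutoff and sample rate in the usage note are illustrative assumptions, and this is not necessarily the filter design Whim uses.

```python
import math


def high_pass(samples, cutoff_hz, sample_rate_hz):
    """First-order RC high-pass filter over a list of float samples."""
    if not samples:
        return []
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)  # time constant for the cutoff
    dt = 1.0 / sample_rate_hz               # time between samples
    alpha = rc / (rc + dt)
    out = [samples[0]]
    for i in range(1, len(samples)):
        # Pass changes between samples, let any constant offset decay away.
        out.append(alpha * (out[-1] + samples[i] - samples[i - 1]))
    return out
```

For example, `high_pass(frame, cutoff_hz=100, sample_rate_hz=16000)` attenuates content well below 100 Hz while leaving most speech energy intact.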

XTTS v2 text-to-speech with custom speaker references. Persona manager with coined response playlists per voice clone: pre-rendered, context-aware, and confidence-gated. Spectrogram visualization and Table Reads output.

Embedded OSM tile map with NWS NEXRAD precipitation overlay. Open-Meteo conditions, 7-day forecast, anemometer, NWR audio via web stream and RTL-SDR. Severe weather alerts pushed to mobile devices.

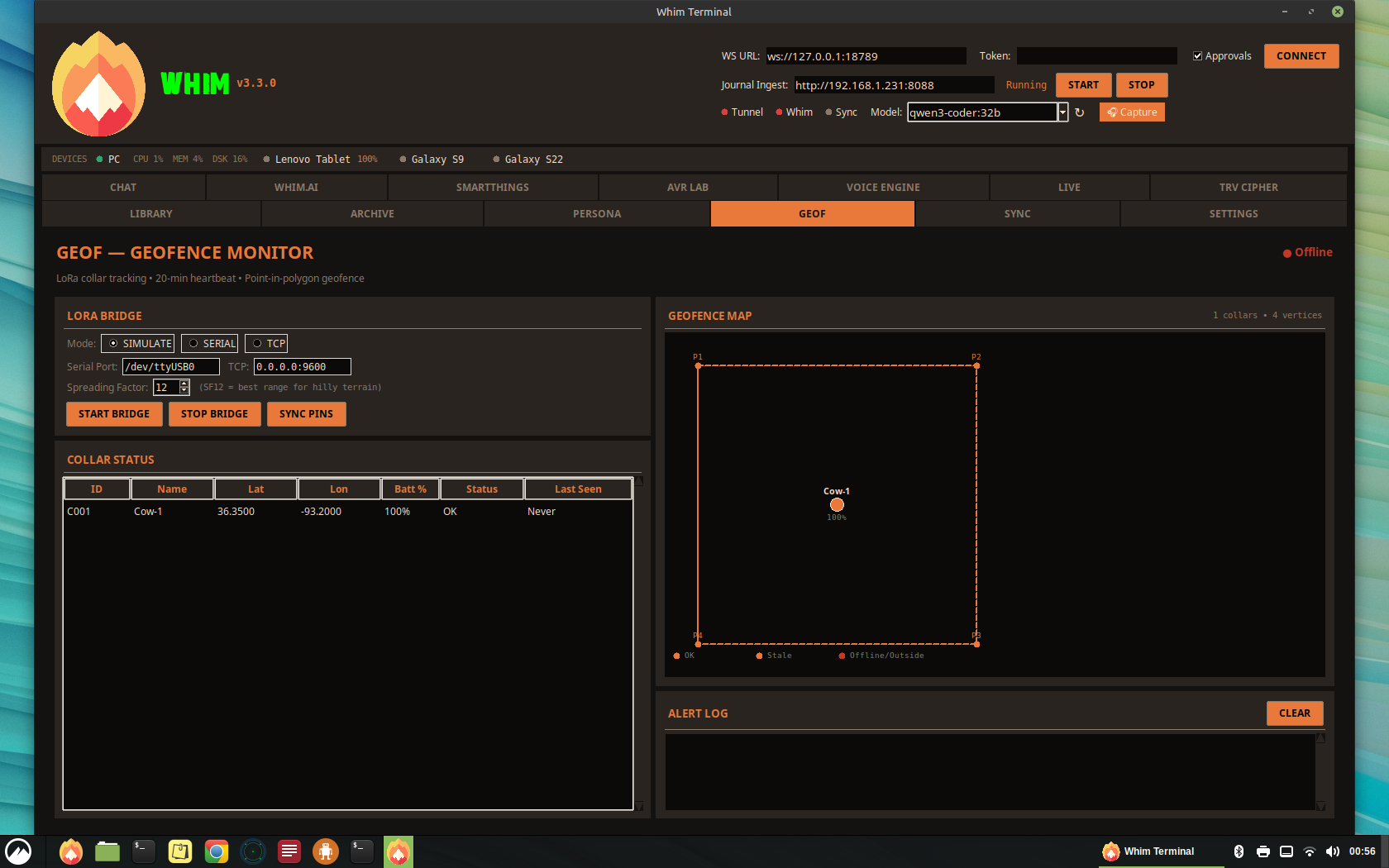

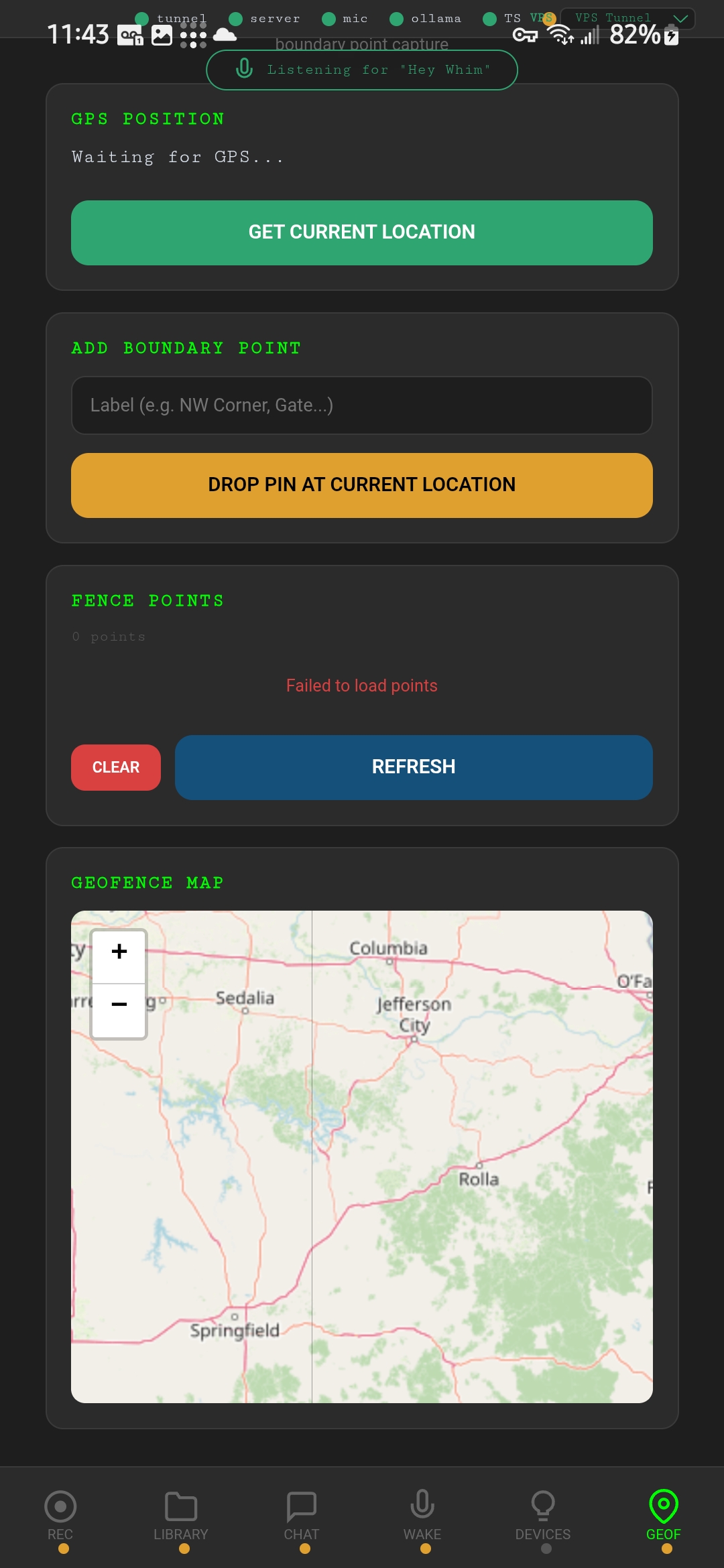

Canvas map with fence pin management, collar status table, LoRa bridge integration, and 20-minute heartbeat monitor. Originally built for livestock tracking on rural property.
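
The core geofence check can be sketched as a point-in-polygon test plus a heartbeat timeout. The function names and the status strings below are hypothetical, and coordinates are treated as planar, which is a reasonable approximation for a fence the size of a pasture.

```python
from datetime import datetime, timedelta

HEARTBEAT_TIMEOUT = timedelta(minutes=20)  # matches the 20-minute heartbeat


def inside_fence(point, fence):
    """Ray-casting test: point is (lat, lon), fence is a list of (lat, lon) pins."""
    lat, lon = point
    inside = False
    for i in range(len(fence)):
        lat1, lon1 = fence[i]
        lat2, lon2 = fence[(i + 1) % len(fence)]
        # Only edges that straddle the point's latitude can be crossed.
        if (lat1 > lat) != (lat2 > lat):
            # Longitude at which this edge crosses the point's latitude.
            cross_lon = lon1 + (lat - lat1) * (lon2 - lon1) / (lat2 - lat1)
            if lon < cross_lon:
                inside = not inside
    return inside


def collar_status(position, last_seen, fence, now):
    """Return 'stale', 'inside', or 'outside' for one collar."""
    if now - last_seen > HEARTBEAT_TIMEOUT:
        return "stale"  # no heartbeat within the window
    return "inside" if inside_fence(position, fence) else "outside"
```

A collar that has not reported for more than 20 minutes is flagged as stale regardless of its last known position, since that position can no longer be trusted.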

Amateur radio station monitor with embedded tile map, Direwolf integration (KISS/AGWPE/simulate), station list with distance filtering, base station config, and raw packet log.
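
Distance filtering of the station list can be approximated with the haversine formula, as in the sketch below. The station dictionary layout and the `stations_within` helper are assumptions made for this example, not the module's real data model.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two lat/lon points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def stations_within(base, stations, max_km):
    """Return (callsign, distance_km) pairs within max_km, nearest first."""
    base_lat, base_lon = base
    hits = []
    for station in stations:
        d = haversine_km(base_lat, base_lon, station["lat"], station["lon"])
        if d <= max_km:
            hits.append((station["callsign"], d))
    return sorted(hits, key=lambda pair: pair[1])
```

The base station position from the config plays the role of `base`, and each decoded position packet contributes one entry to `stations`.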

Samsung SmartThings device browser with scan, filter, favorites, device detail, and recently-controlled history. Control lights, locks, and sensors from the terminal.

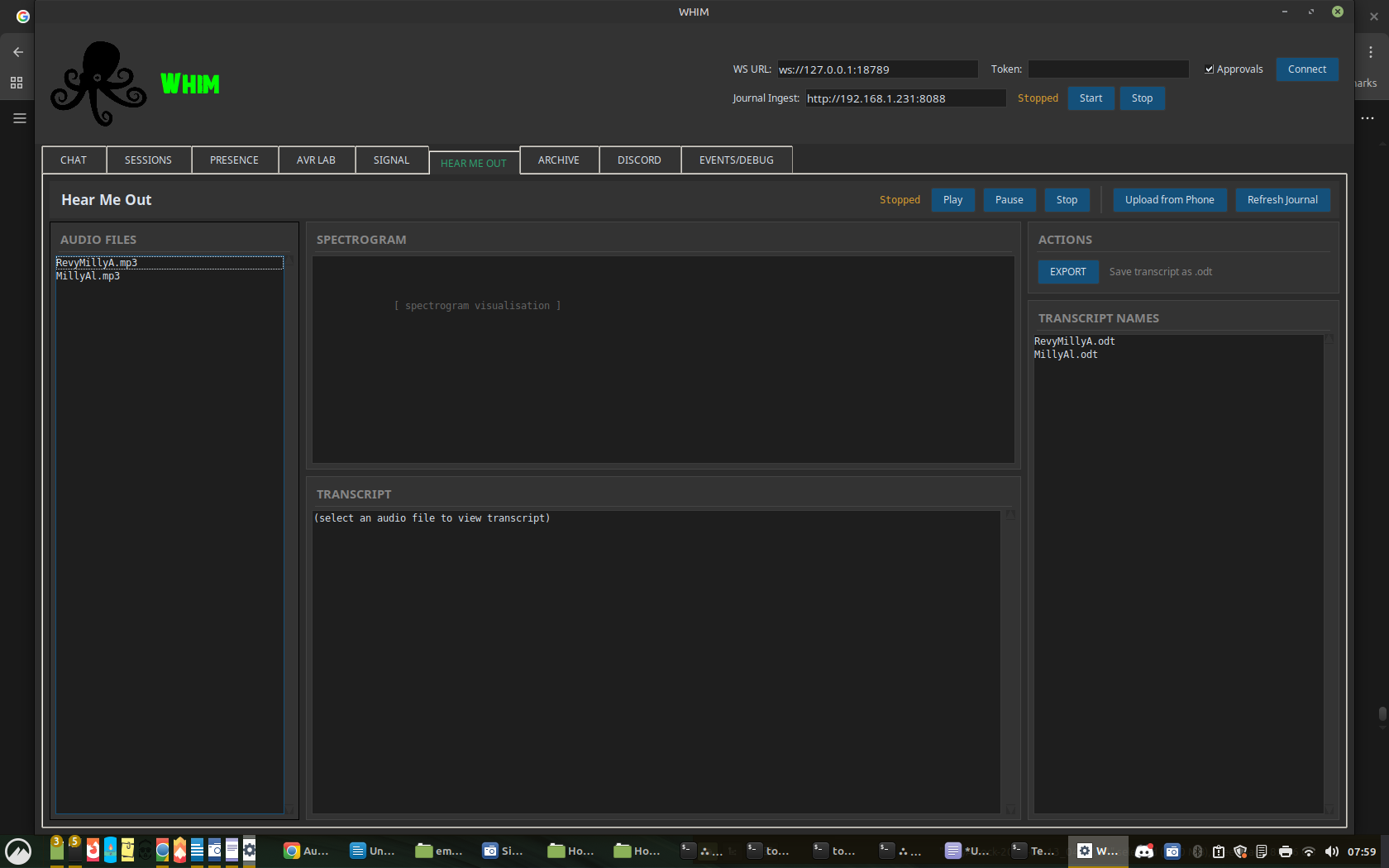

Audio transcription workstation with spectrogram, playback transport, Whisper-based transcription, ODT export, and scrub tools. Process voice recordings into searchable text.

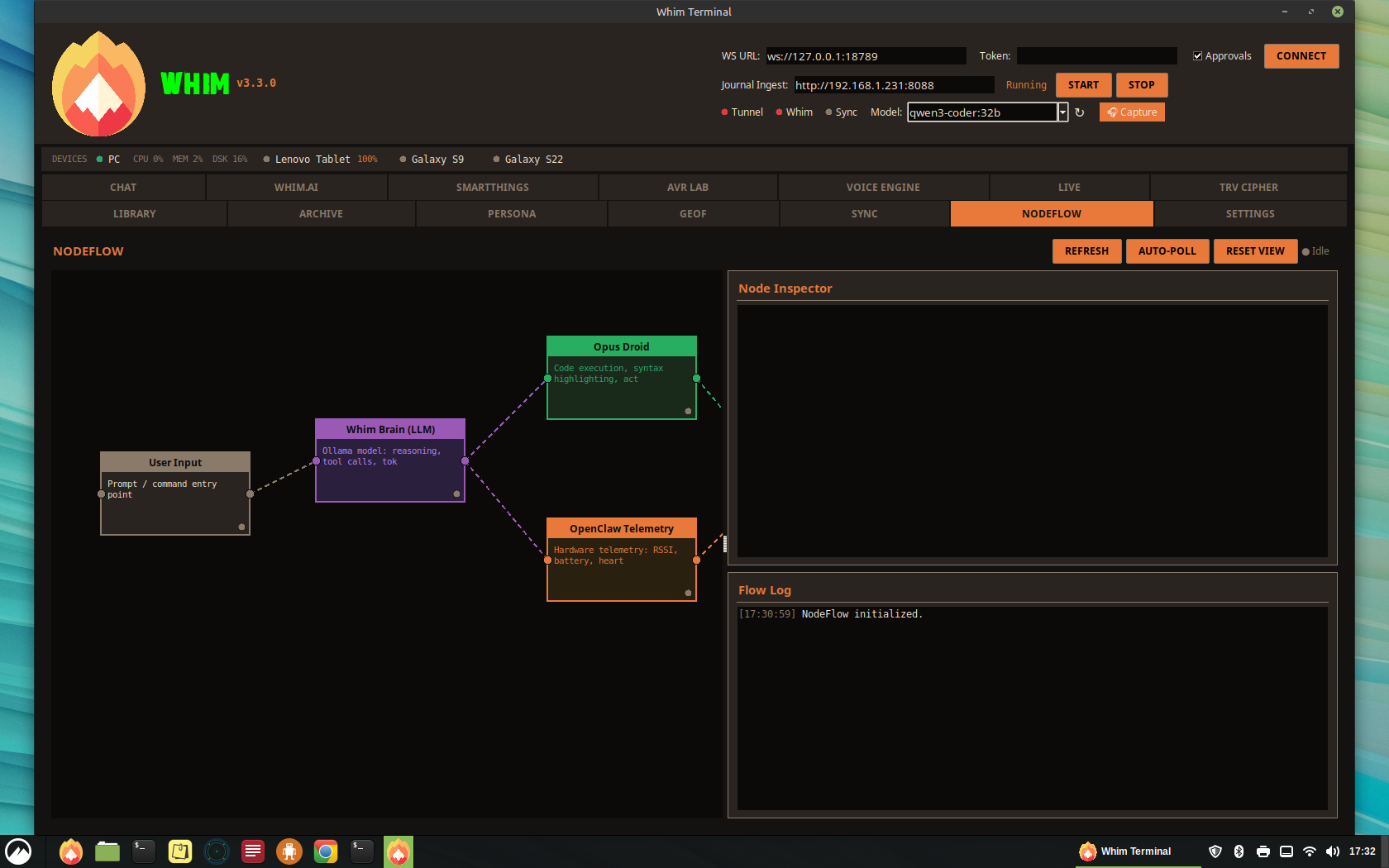

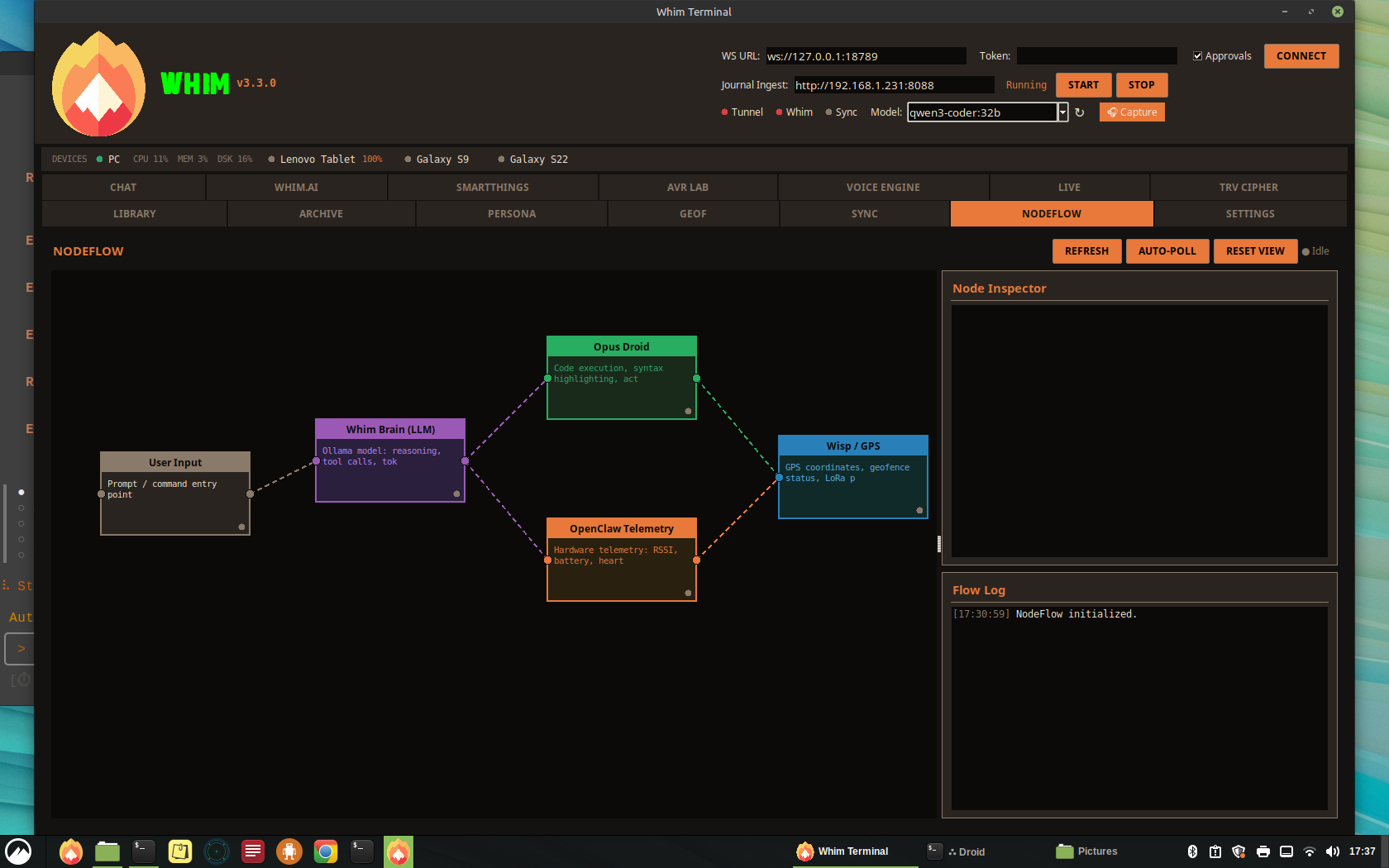

Visual node-based flow editor showing active droids, LLM reasoning chains, OpenClaw telemetry, and data flow connections. Drag-and-drop canvas with auto-poll and node inspector.

Integrated messaging through Signal Desktop (via signal-cli) and Discord bot. Send and receive messages directly from the terminal.

MJPEG screen share server with QR code pairing, phone camera feed, and desktop preview. ADB portal for APK installation, emulator management, and device screenshots.

JSON-RPC over WebSocket (port 18789). The AI agent protocol layer connecting the terminal to Ollama inference with model switching, context injection, tool definitions, and multi-model routing.
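
A message on this bus might be framed as shown below. The sketch assumes JSON-RPC 2.0 envelopes, and the method names (`model.switch` in the usage note) are hypothetical placeholders, since the gateway's actual method list is not given here. Only the framing is shown; any WebSocket client library can carry the resulting strings.

```python
import itertools
import json

GATEWAY_URL = "ws://127.0.0.1:18789"  # host is an assumption; the port is the gateway's
_request_ids = itertools.count(1)     # monotonically increasing request ids


def make_request(method, params=None):
    """Serialize one JSON-RPC 2.0 request as a text frame."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


def parse_response(raw):
    """Return (id, result) from a response frame, raising on an RPC error."""
    message = json.loads(raw)
    if "error" in message:
        error = message["error"]
        raise RuntimeError(f"RPC error {error.get('code')}: {error.get('message')}")
    return message["id"], message.get("result")
```

For example, `make_request("model.switch", {"model": "llama3.1:8b"})` produces a frame that a client would send over the socket, then match to its reply by `id`.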

The voice pipeline uses state-aware backchannel cues -- color-coded, timed, and honest about what the system is actually doing.

A federated mesh of nodes. Each device operates autonomously, syncs when connected, stays independent when offline.

| Device | LAN IP | Tailscale IP | Role |
|---|---|---|---|
| PC (CARRAMint) | 192.168.1.231 | 100.69.17.20 | Server hub |
| Galaxy S22 | -- | 100.77.59.2 | Primary mobile |
| Galaxy S9 | 192.168.1.198 | 100.97.96.1 | Secondary mobile |
| Lenovo Tablet | 192.168.1.112 | 100.64.255.124 | Tablet client |

| Channel | Protocol | Port | Purpose |
|---|---|---|---|
| OpenClaw Gateway | WebSocket | 18789 | Core command bus |
| Whim.m Server | HTTP | 8089 | Mobile app backend |
| Journal Ingest | HTTP multipart | 8088 | Voice recording upload |
| Screen Share | MJPEG | 8091 | Desktop-to-phone stream |
| SSH Tunnel | Reverse SSH via VPS | 8089 | Cross-network access |
| Ollama | HTTP REST | 11434 | LLM inference |
| Signal CLI | HTTP | 8080 | Messaging |
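
When debugging a setup, a quick way to see which of these channels are listening is a plain TCP connect test. The sketch below uses the ports from the table; the default host and the helper names are assumptions for illustration.

```python
import socket

# Ports taken from the channel table (the SSH tunnel forwards to 8089).
SERVICES = {
    "OpenClaw Gateway": 18789,
    "Whim.m Server": 8089,
    "Journal Ingest": 8088,
    "Screen Share": 8091,
    "Ollama": 11434,
    "Signal CLI": 8080,
}


def port_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


def check_services(host="127.0.0.1"):
    """Map each service name to whether its port accepts connections."""
    return {name: port_open(host, port) for name, port in SERVICES.items()}
```

Calling `check_services()` on the server hub, or `check_services("100.69.17.20")` from a Tailscale client, gives a dictionary of service names and reachability flags.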

Local AI runs on your GPU. The table below shows which model sizes fit which hardware.

| Tier | Parameters | VRAM (Q4/Q8) | Suggested Hardware | Whim Performance |
|---|---|---|---|---|
| Edge / Mobile | 1B - 3B | 2 - 4 GB | iGPU / Steam Deck / RPi / 8GB RAM | Near-instant response |
| Standard | 7B - 9B | 6 - 10 GB | RTX 3060 / 4060 / M1-M3 Mac | Snappy (30+ t/s) |
| Pro / Coding | 12B - 14B | 12 - 16 GB | RTX 4070 / 3080 / M-Pro | Thoughtful reasoning |
| Research | 30B - 35B | 20 - 24 GB | RTX 3090 / 4090 / 5090 / M-Max | Deep analysis |
| Sovereign | 70B+ | 40 GB+ | Dual 3090s / M-Ultra | Human-level complexity |
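
The tier table can be read as a simple lookup. The sketch below takes the lower bound of each VRAM range as the minimum needed for that tier, which is an assumption about how to interpret the table rather than a measured requirement.

```python
# (tier name, parameter range, minimum VRAM in GB), lowest tier first.
TIERS = [
    ("Edge / Mobile", "1B - 3B", 2),
    ("Standard", "7B - 9B", 6),
    ("Pro / Coding", "12B - 14B", 12),
    ("Research", "30B - 35B", 20),
    ("Sovereign", "70B+", 40),
]


def best_tier(vram_gb):
    """Return (tier, parameter range) for the largest tier that fits, or None."""
    fit = None
    for name, params, min_vram in TIERS:
        if vram_gb >= min_vram:
            fit = (name, params)  # keep climbing while the tier still fits
    return fit
```

A 24 GB card such as an RTX 3090 lands in the Research tier under this reading, while an 8 GB card lands in Standard.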

Clone, configure, launch. No accounts, no cloud keys, no telemetry.

Vehicle dashboard (Whim.v): 13.6" Android head unit

| Dependency | Version | Purpose |
|---|---|---|
| Python | 3.10+ | Runtime |
| Tkinter | bundled | Desktop UI framework |
| Ollama | latest | Local LLM inference |
| Tailscale | optional | Mesh VPN for mobile/vehicle |
| Coqui XTTS v2 | optional | Voice synthesis (requires GPU) |

Whim is for creators and developers who believe AI should run on hardware you own. Friction-free. Open source. Pushing AI forward together.

Every tab is a pluggable Python module. Write your own, drop it in, share it. GeoF, HAM, Doppler -- they all started as standalone modules. The tab system is config-driven: enable what you need, disable the rest.
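
One way a config-driven tab system like this can work is to map tab names to importable modules and load only the enabled ones. The config layout and the `load_tabs` helper below are hypothetical; Whim's real config format may differ.

```python
import importlib


def load_tabs(config, import_module=importlib.import_module):
    """Import the module behind every enabled tab and return them by tab name.

    config maps a tab name to {"module": dotted path, "enabled": bool}.
    """
    tabs = {}
    for name, entry in config.items():
        if not entry.get("enabled", False):
            continue  # disabled tabs are never imported
        tabs[name] = import_module(entry["module"])
    return tabs
```

With a layout like `{"GeoF": {"module": "tabs.geof", "enabled": True}, "HAM": {"module": "tabs.ham", "enabled": False}}` (module paths invented for this example), only the GeoF module would be imported, so a disabled tab costs nothing at startup.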

Talk to Enoch (the OpenClaw bot), share your builds, debug configurations, and help shape the roadmap. The community runs on the same sovereign principles as the software.

Full system documentation: architecture topology, voice engine tuning, networking setup, tab reference, and deployment guides for Linux, macOS, Windows, and Android.

No gated features. No premium tiers. No data collection. MIT licensed. AI should evolve alongside the people building it, not behind a paywall.